Ultimate Guide to Ethical AI Personalization

Balancing personalization and privacy: principles, risks, and best practices for transparent, GDPR-compliant, fair, and audited AI.

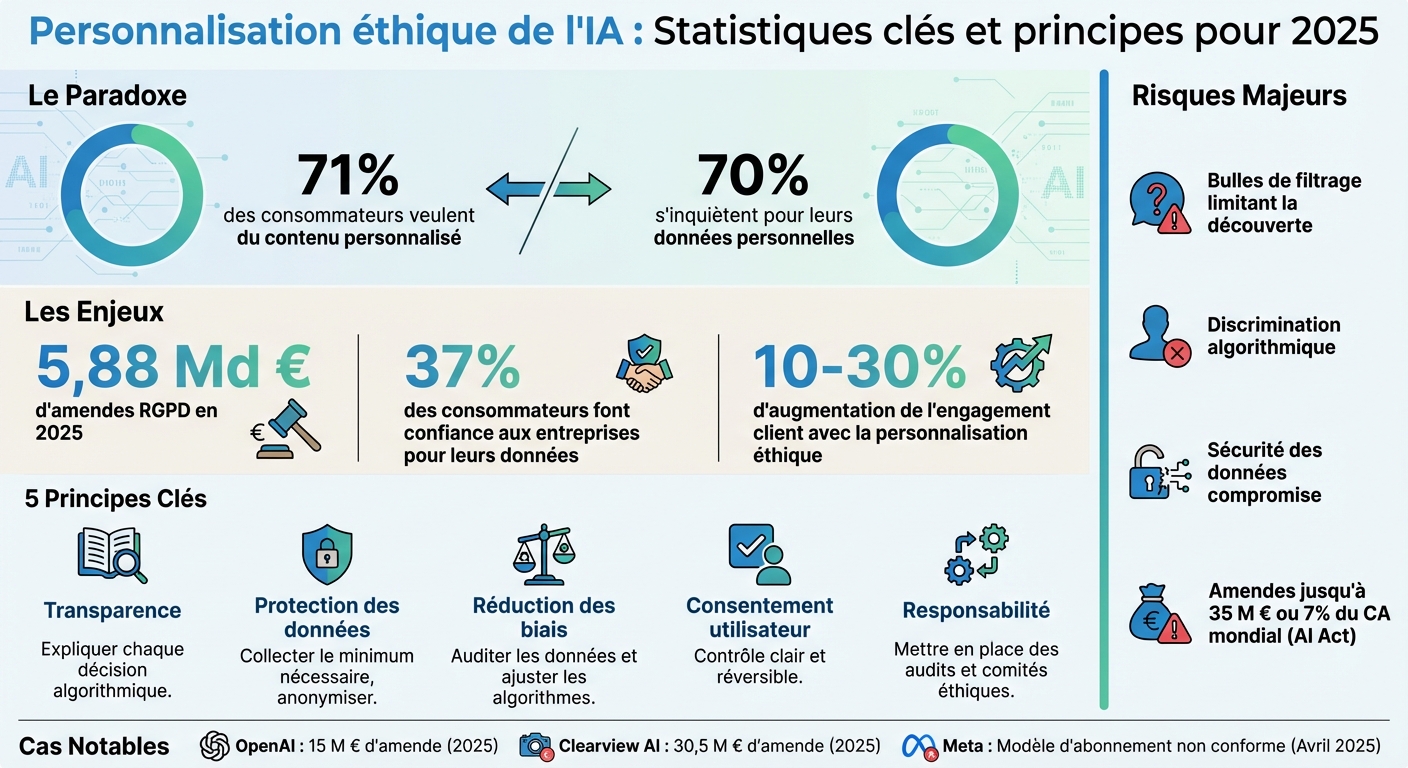

71% of consumers want personalized content, but 70% are concerned about their personal data. This paradox pushes brands to balance personalization against privacy. Ethical AI offers a solution: tailoring user experiences while ensuring transparency, security, and regulatory compliance. By 2025, GDPR fines reached €5.88 billion, confirming the growing importance of these issues.

Key Principles for Ethical AI:

- Transparency: Explain every algorithmic decision (e.g., explainable AI).

- Data Protection: Collect only what is necessary and anonymize.

- Bias Reduction: Audit your data and adjust your algorithms.

- User Consent: Ensure clear and reversible control.

- Accountability: Run regular audits and set up ethics committees.

Risks of Unethical AI:

- Filter bubbles limiting discoveries.

- Algorithmic discrimination (e.g., pricing based on biased data).

- Compromised data security.

Practical Solutions:

- Audit your systems to detect biases.

- Provide detailed consent options.

- Use tools like IBM AI Fairness 360 to monitor algorithmic fairness.

Example: Feedcast.ai helps brands personalize without intrusive profiling, focusing on products, not users. With options starting at €0/month, it ensures GDPR compliance and transparency.

Conclusion: Trust is key. Responsible personalization boosts customer engagement by 10 to 30%, while avoiding costly penalties. Brands must act now to combine performance with user respect.

Figure: Ethical AI Personalization: Key Statistics and Principles for 2025


Fundamental Ethical Principles for AI Personalization

To build AI personalization that inspires trust, five key principles should guide every strategic decision. Together, these pillars reconcile business performance, respect for users, and compliance with current regulations. Let’s explore each of these principles.

Transparency in AI Decisions

Transparency is based on a simple rule: explain why each algorithmic decision is made. For example, a "Why?" button placed next to personalized content could provide a clear explanation, such as: "We recommend these running shoes because you have viewed this category three times this month"[3].

Megan Yu, Product Manager at Salesforce Interaction Studio, perfectly summarizes this idea:

"The more transparent you are about your personalization strategy, the easier it is for customers to understand what data they should provide based on how it will be used." [2]

Transparency dashboards go even further. They give users a "My Data & Personalization" portal where they can see exactly what the AI knows about them and how that information shapes their experience. In December 2020, Grubhub applied this principle in its "Taste of 2020" campaign, which used 32 personalized attributes per user. The result: a 100% increase in social media mentions and an 18% rise in word-of-mouth recommendations[4].

Technically, explainable AI (XAI) is essential for documenting each model so that its decisions can be audited and explained to users or regulators[1]. This approach also aligns with Article 22 of the GDPR, which restricts solely automated decisions that have legal or similarly significant effects and is widely read as supporting a right to explanation for such decisions[3].
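As a minimal sketch of the "Why?" button described above, the snippet below turns the signal behind a recommendation into a plain-language reason. The `Signal` schema, the `TEMPLATES` wording, and the `explain` function are all hypothetical names introduced for illustration; a real system would draw on whatever signals its model actually records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """One piece of evidence behind a recommendation (hypothetical schema)."""
    kind: str     # e.g. "category_view"
    subject: str  # e.g. "running shoes"
    count: int    # how many times the signal was observed
    window: str   # human-readable time window, e.g. "this month"


# Hypothetical templates keyed by signal kind; the wording is illustrative only.
TEMPLATES = {
    "category_view": "you have viewed this category {count} {times} {window}",
    "purchase": "you bought {subject} {count} {times} {window}",
}


def explain(item: str, signals: list[Signal]) -> str:
    """Build a plain-language reason from the most frequent known signal."""
    known = [s for s in signals if s.kind in TEMPLATES]
    if not known:
        # Do not invent a reason when no explainable signal was recorded.
        return f"We recommend {item}, but no explainable signal is on record."
    top = max(known, key=lambda s: s.count)
    times = "time" if top.count == 1 else "times"
    reason = TEMPLATES[top.kind].format(
        subject=top.subject, count=top.count, times=times, window=top.window
    )
    return f"We recommend {item} because {reason}."


if __name__ == "__main__":
    views = [Signal("category_view", "running shoes", 3, "this month")]
    print(explain("these running shoes", views))
```

Keeping the templates separate from the model means the same logged signals can feed both the user-facing explanation and an audit trail.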

Data Protection and GDPR Compliance

GDPR compliance is based on the principle of "privacy by design," which involves integrating data security from the earliest stages of development[1]. This includes collecting only strictly necessary data, in accordance with the principle of minimization[3].

"Just-in-time" data requests illustrate this approach well. For example, only asking for geolocation when the user is looking for a nearby store[3]. Additionally, replacing generic "Accept All" banners with granular options, such as "Essential Only" or "Full Personalization," enhances user autonomy while maintaining a quality experience.

According to [1], data anonymization has been reported to improve personalization accuracy by 30% while keeping processing compliant[1]. Furthermore, Data Protection Impact Assessments (DPIAs) are mandatory for high-risk AI processing because they identify potential threats to individuals' rights[6]. In 2025, OpenAI received a €15 million fine for failures in transparency and age verification[1].
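The minimization principle described above can be sketched in a few lines: keep only the fields a given purpose needs and replace the direct identifier with a keyed hash. The field names in `ALLOWED_FIELDS` and the `minimize` helper are assumptions made for this example. Note that keyed hashing is pseudonymization rather than anonymization, so the output should still be treated as personal data.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this personalization purpose needs.
ALLOWED_FIELDS = {"user_id", "viewed_category", "timestamp"}


def minimize(event: dict, secret_key: bytes) -> dict:
    """Drop fields outside the allow-list and pseudonymize the user ID."""
    kept = {k: v for k, v in event.items() if k in ALLOWED_FIELDS}
    if "user_id" in kept:
        # Keyed hash (HMAC-SHA256): stable per user, not reversible without the key.
        digest = hmac.new(secret_key, str(kept["user_id"]).encode(), hashlib.sha256)
        kept["user_id"] = digest.hexdigest()
    return kept


if __name__ == "__main__":
    raw = {
        "user_id": 42,
        "email": "jane@example.com",  # not needed for recommendations: dropped
        "viewed_category": "running shoes",
        "timestamp": "2025-03-01T10:00:00Z",
    }
    print(minimize(raw, secret_key=b"example-key-for-demo-only"))
```

An allow-list fails closed: a new field added upstream is discarded until someone decides it is necessary.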

Detection and Reduction of Bias

Algorithmic biases often stem from training data that reflects prejudices or a lack of diversity, which can skew results[1]. To address this, it is crucial to conduct regular data audits before deployment, ensuring that they adequately represent all populations and identifying potential biases[1].

Metrics such as demographic parity tests or equal opportunity assessments help measure algorithmic fairness[1]. The Information Commissioner's Office (ICO) defines fairness as:

"Fairness means you should only process personal data in ways that people would reasonably expect and not use it in any way that could have unjustified adverse effects on them." [6]

Finally, integrating fairness constraints directly into algorithms can ensure equitable treatment across different demographic groups[1].
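To make the two metrics named above concrete, here is a small sketch using plain Python lists. Demographic parity compares how often each group receives the positive outcome; equal opportunity compares true positive rates, that is, how often people who should receive the outcome actually do. The function names and the toy data are illustrative assumptions, not the API of any particular fairness library.

```python
def selection_rate(preds, groups, group):
    """Share of members of `group` who received the positive outcome (1)."""
    rows = [p for p, g in zip(preds, groups) if g == group]
    if not rows:
        raise ValueError(f"no rows for group {group!r}")
    return sum(rows) / len(rows)


def true_positive_rate(preds, labels, groups, group):
    """Among members of `group` whose true label is 1, share predicted 1."""
    rows = [p for p, y, g in zip(preds, labels, groups) if g == group and y == 1]
    if not rows:
        raise ValueError(f"no positive labels for group {group!r}")
    return sum(rows) / len(rows)


def demographic_parity_difference(preds, groups, a, b):
    """Difference in selection rate between groups a and b (0 means parity)."""
    return selection_rate(preds, groups, a) - selection_rate(preds, groups, b)


def equal_opportunity_difference(preds, labels, groups, a, b):
    """Difference in true positive rate between groups a and b."""
    return (true_positive_rate(preds, labels, groups, a)
            - true_positive_rate(preds, labels, groups, b))


if __name__ == "__main__":
    # Toy data: 1 = offer shown (preds) / offer was relevant (labels).
    preds = [1, 1, 0, 1, 0, 0, 1, 0]
    labels = [1, 0, 0, 1, 1, 0, 1, 1]
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    print(demographic_parity_difference(preds, groups, "a", "b"))
    print(equal_opportunity_difference(preds, labels, groups, "a", "b"))
```

Values close to zero indicate similar treatment. What gap counts as acceptable is a policy decision that the metric itself cannot settle.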

User Consent and Control

User consent must be explicit, informed, and revocable at any time. Practices like "paying for privacy," where users must pay to avoid ad tracking, were deemed non-compliant by the European Commission in 2025, as they do not meet the criteria for free consent under the GDPR[3].

To put this principle into practice, implement dashboards that let users manage their preferences easily, for example with simple switches to disable certain types of profiling or delete specific data[3]. Contextual messages, such as "Share your purchase history for 40% more relevant recommendations," also make consent clearer[3]. Finally, define a specific purpose for each data use and ensure that any new use remains covered by the initial consent[6].
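The dashboard switches and purpose checks described above could be backed by a record like the one sketched below. The purpose names in `PURPOSES` and the `ConsentRecord` class are hypothetical; the point is only that consent is stored per purpose, can be withdrawn at any time, and is checked before each use.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical purposes a preference dashboard might expose as switches.
PURPOSES = {"recommendations", "purchase_history", "ads_profiling"}


@dataclass
class ConsentRecord:
    """Per-user consent, stored per purpose so each one can be revoked alone."""
    user_id: str
    granted: dict = field(default_factory=dict)  # purpose -> time consent was given

    def grant(self, purpose: str) -> None:
        if purpose not in PURPOSES:
            raise ValueError(f"unknown purpose: {purpose}")
        self.granted[purpose] = datetime.now(timezone.utc)

    def revoke(self, purpose: str) -> None:
        # Revoking something never granted is a harmless no-op.
        self.granted.pop(purpose, None)

    def allows(self, purpose: str) -> bool:
        # A new use is allowed only if that exact purpose was consented to.
        return purpose in self.granted


if __name__ == "__main__":
    consent = ConsentRecord(user_id="u-123")
    consent.grant("recommendations")
    print(consent.allows("recommendations"))  # consent was given for this purpose
    print(consent.allows("ads_profiling"))    # never granted, so not allowed
    consent.revoke("recommendations")
    print(consent.allows("recommendations"))  # withdrawn
```

Storing the grant timestamp also gives auditors a way to check when each consent was collected.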

Accountability in AI Systems

Accountability requires organizations to take full ownership of their algorithmic decisions and to correct any drift quickly. This calls for rigorous internal governance, including ethics committees and regular audits of AI systems to verify transparency and compliance across all operations.

Risks of Unethical AI Personalization

Poorly governed AI personalization can have serious consequences for businesses and their customers. For example, GDPR-related fines totaled €5.88 billion in 2025, with a growing share attributed to violations involving AI [1]. Meanwhile, only 37% of consumers report trusting companies to manage their personal data [1]. Let’s review the specific pitfalls that this technology can create.

Filter Bubbles and Algorithmic Manipulation

Poorly designed personalization can trap users in "filter bubbles," limiting their access to a variety of content and products. This creates an echo chamber effect that reduces user satisfaction and keeps users from discovering new items. Additionally, some AI systems exploit psychological vulnerabilities through tactics like infinite scrolling or artificial scarcity signals [1].

A striking example is dynamic pricing discrimination.

"I have attended meetings where legal teams were horrified to learn that the marketing department's AI tool was adjusting prices based on users' presumed wealth, inferred from their browsing history. This is the kind of time bomb in terms of compliance that AI has planted in thousands of organizations." – Ansari Alfaiz, Digital Marketing Director and GDPR Compliance Expert [3]

This practice can be considered discriminatory automated decision-making under Article 22 of the GDPR. In October 2025, Meta stirred controversy by using conversations with its AI chatbots to target ads. For example, a user mentioning hiking spots would immediately see ads for hiking shoes on Instagram [3].

Data Security Vulnerabilities

Beyond filter bubbles, these practices also raise major security concerns. AI systems can infer sensitive information (such as health status or income level) even when users never provided it, which can amount to a privacy violation. Furthermore, advanced techniques can re-identify individuals from anonymized data, undermining traditional anonymization methods. In 2025, Clearview AI was fined €30.5 million for violations related to facial recognition and insufficient protections [1].

"If your personalization strategy is poorly managed or fragmented, the costs to correct it will be high – both in terms of direct investments and the damage caused to your reputation by unsecured data or intrusive marketing." – Megan Yu, Product Manager Interaction Studio, Salesforce [2]

Discriminatory Outcomes from Biased Data

AI systems often rely on historical data that may reflect existing biases, leading to different (and sometimes inferior) experiences for certain demographic groups [1]. For example, targeting only older individuals for anti-aging products reinforces stereotypes and may alienate other potential consumer groups [2]. These practices can permanently damage a brand's reputation, and they carry regulatory exposure as well. Under the European AI Act, violations related to high-risk AI systems can result in fines of up to €35 million or 7% of global revenue [1]. In April 2025, the European Commission ruled that Meta's "ad-free" subscription offering did not comply with GDPR requirements, as it forced users to waive their privacy rights [3].

| AI Personalization Tactic | Risk Level | Main Compliance Risk |
|---|---|---|
| Predictive behavioral targeting | HIGH | GDPR Art. 22, Lack of consent |
| Real-time price adaptation | CRITICAL | GDPR Art. 22, Discrimination |
| Sentiment analysis by chatbot | HIGH | Lack of consent for profiling |
| Cohort-based personalization | LOW | Minimal data, generally safe |

These examples highlight the importance of adopting responsible AI practices, not only to protect individuals but also to preserve the reputation of businesses.

How to Implement Ethical AI Personalization

Once risks are identified, it's time to move to practical steps. Ethical AI personalization rests on three pillars: auditing data, establishing clear internal guidelines, and maintaining continuous oversight of systems. These measures go beyond regulatory compliance; they also strengthen customer trust by making data usage transparent. Here’s how to implement these essential practices.

Auditing Training Data to Ensure Fairness

Data auditing is a crucial step. The performance of your AI is directly linked to the quality and representativeness of the data used for its training. Ensure that this data reflects the diversity of your users. This involves examining your datasets to identify and correct any potential biases [1]. Before starting training, apply preprocessing and anonymization techniques to limit biases [1]. Also, adopt a data minimization approach: collect only what is necessary, which reduces risks and simplifies GDPR compliance [8].
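One simple way to check whether training data "reflects the diversity of your users" is to compare each group's share of the dataset with its share of a reference population. The sketch below assumes you already have such reference shares; the age bands, the shares, and the 5-point tolerance are invented for illustration.

```python
from collections import Counter


def representation_gaps(samples, reference_shares, tolerance=0.05):
    """Return groups whose dataset share differs from the reference share
    by more than `tolerance` (positive = over-represented)."""
    if not samples:
        raise ValueError("samples must not be empty")
    counts = Counter(samples)
    total = len(samples)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 4)
    return gaps


if __name__ == "__main__":
    # Hypothetical age bands attached to 100 training rows.
    rows = ["18-34"] * 70 + ["35-54"] * 25 + ["55+"] * 5
    # Hypothetical shares of the real customer base.
    reference = {"18-34": 0.40, "35-54": 0.35, "55+": 0.25}
    print(representation_gaps(rows, reference))
```

A flagged gap is a prompt for investigation (resampling, reweighting, or collecting more data), not proof of bias on its own.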

Establishing Internal Ethical Guidelines

Ethical personalization requires solid internal governance. Start by creating an ethics committee that brings together technical and legal experts [8], and build "Privacy-by-Design" into your systems from the outset [8]. Prefer interest-based targeting over demographic criteria to avoid reinforcing stereotypes [2]. Set clear objectives aligned with ROI, along with written guidelines for how your algorithms should behave [9]. Implement explainability protocols (XAI) so that your recommendations are understandable [7]. Finally, offer tiered consent options, such as "Essential Only," "Functional and Analytical," or "Full Personalization and Ads," rather than a single "Accept All" button [3].

Conducting Regular Audits of AI Systems

Continuous monitoring is essential to maintain the fairness of your AI systems. Regularly conduct audits to identify and correct biases [7]. Measure biases using quantitative metrics such as demographic parity, equal opportunity, and individual fairness [7]. Also, monitor for the emergence of new biases over time [1]. Human oversight should be planned to manage complex or sensitive cases [7]. For systems presenting high privacy risks, conduct Data Protection Impact Assessments (DPIAs) [1]. Finally, high-risk AI systems, as defined by the European AI Act, require rigorous compliance assessments, a risk management system, and human oversight [1].

| Audit Focus | Traditional Personalization | AI Personalization |
|---|---|---|
| Data Scope | Static lists, past behavior | Real-time flows, predictive intent [4] |
| Risk Assessment | Manual segmentation errors | Algorithmic bias, opacity [1] |
| Compliance | Standard cookie consent | GDPR Art. 22 (Automated decision) [3] |
| Monitoring | Periodic campaign reviews | Continuous bias drift detection [1] |
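Monitoring for new biases over time can start with something as simple as recomputing a fairness gap on each batch of decisions and flagging batches that cross a threshold. The sketch below uses the demographic parity gap; the weekly batches and the 0.2 threshold are assumptions chosen for the example, not recommended values.

```python
def parity_gap(preds, groups):
    """Largest difference in positive-outcome rate between any two groups."""
    totals, positives = {}, {}
    for p, g in zip(preds, groups):
        totals[g] = totals.get(g, 0) + 1
        positives[g] = positives.get(g, 0) + p
    if not totals:
        raise ValueError("batch must not be empty")
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


def drift_alerts(batches, threshold=0.2):
    """Return the indices of batches whose parity gap exceeds `threshold`."""
    return [
        i for i, (preds, groups) in enumerate(batches)
        if parity_gap(preds, groups) > threshold
    ]


if __name__ == "__main__":
    groups = ["a", "a", "b", "b"]
    weekly_batches = [
        ([1, 0, 1, 0], groups),  # both groups at 0.5: gap 0.0
        ([1, 1, 1, 0], groups),  # group a at 1.0, group b at 0.5: gap 0.5
    ]
    print(drift_alerts(weekly_batches))
```

Flagged batches are natural candidates for the human review that sensitive cases call for.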

Measuring the Ethical Performance of AI

Once principles are established and risks identified, it is equally essential to evaluate the impact of the ethical practices implemented. Adopting ethical measures is not enough; their effectiveness must be measured using KPIs, bias detection tools, and customer feedback.

Yohann B.